Does re-posting someone’s article violate Google’s Quality Guidelines?

In a nutshell: yes, this violates Google’s Quality Guidelines, assuming you haven’t taken the steps necessary to keep the re-post from being treated as duplicate content. Since your question didn’t mention those steps, I am assuming you haven’t taken them. The details on how to avoid the duplicate content penalty come a little farther down. For now, let’s review the basics and background of duplicate content and content syndication.

What is Content Syndication?

Content Syndication is when one website, the original publisher, assigns the right to re-post its content to one or more other websites (the syndicates). Syndication is a way of defining the relationships between all involved parties: the publisher and those who republish the content.

What is Duplicate Content?

Duplicate content, as a term used for SEO, describes content on one webpage that is substantially similar to content on other webpages, whether on that page’s own website or on another website. Duplicate content is measured as a percentage of the total text on the page. Some duplicate content is normal, especially template-based text such as the author bio in a blog post, or other blocks of text that stay the same across similar pages on a website.
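To make the percentage idea concrete, here is a minimal sketch in Python. It assumes one simple way of measuring overlap: comparing five-word phrases (often called shingles) between two pages. This is not how Google or plagspotter.com actually score pages; the function names and the five-word window are my own illustration.

```python
import re


def shingles(text: str, size: int = 5) -> set:
    """Return the set of overlapping word sequences ("shingles") in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        # Very short text: treat the whole thing as a single shingle.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def duplicate_percent(page_text: str, other_text: str, size: int = 5) -> float:
    """Percentage of page_text's shingles that also appear in other_text."""
    page = shingles(page_text, size)
    if not page:
        return 0.0
    other = shingles(other_text, size)
    return 100.0 * len(page & other) / len(page)
```

Calling `duplicate_percent(my_post, their_post)` with the plain text of two pages gives a rough share of your wording that also shows up on the other page. A straight copy scores 100, while a genuine rewrite with its own perspective should score far lower.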

Essentially, Google feels it’s their job to ensure the web, and their search results, bring some sort of unique value to their users. Back in 2011 they released an algorithm update called Google Panda, which targets sites that scrape or re-post content, and webpages that provide poor, thin or no-value content. Content Syndication was very common before Google existed, so it was natural for publishers to continue relationships with content syndicates after the web became a place to publish.

There is an expectation that a site that chooses to share someone’s content (aka a syndicate) first reads an article and gets something out of it that they want to share with their audience. Or, as with many large news sites, they write their version of the article with slightly different wording, quotes and info than their peers.

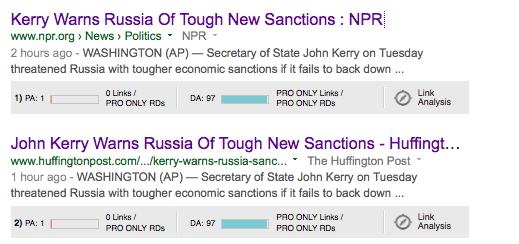

Here’s a great example:

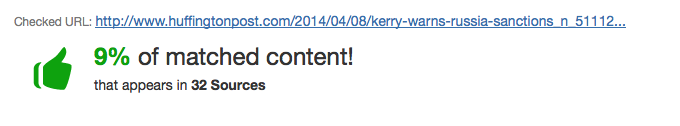

By simply reposting, without sharing that unique perspective, they would have created duplicate content instead of creating unique valuable content for their readers and for search engines. When you run these pages through a plagiarism checker (I prefer plagspotter.com) you can see the percent of duplicate content is below penalty (i.e., red) thresholds:

As you can see, the Huffington Post does a much better job than NPR. However, since NPR ranks ahead of the Huffington Post in the search results, you can safely assume that the duplicate content issues come from the other sites, and did not originate with NPR.

Re-post or Syndicate Content without Violating Google’s Guidelines

How to avoid getting hit with a Google Duplicate Content Penalty or Suppression Filter

Are you aware that Google has outlined Quality Guidelines for all website owners to follow, in order to help keep the web a spam-free and valuable experience for all of its users? If not, then how you re-post content could cause your post to be suppressed from search results, or, worse yet, could result in all of your webpages being removed from Google’s webpage index.

Go read Google’s Quality Guidelines. Google doesn’t accept “Sorry, I didn’t know they were there” as a reasonable excuse for violating its guidelines. Now, Google clearly explains that a penalty from Duplicate Content is rare, unless they feel it is being used to game search results. So, if you are not being hit with a penalty, then what is happening? Well, Google is simply suppressing your page because it is not the original, authoritative source. If a large portion of your content lost traffic over the last four years as a result of being scraped and re-posted, then Google has probably “re-valued” your posts, and pushed them down in search results. To fix that, you need to create more unique content, but that is a topic for another post.

If you see an article on another website that you feel is perfect for your audience, and decide you want to re-post it on your blog so your audience can benefit from it as well, the current (April 2014) Google Quality Guidelines cover this type of content syndication. Here are the basics:

1. Always link back to the original post

2a. Set the meta robots tag on the post to “noindex” so the page will not be indexed in Google and you won’t get hit for duplicate content

OR

2b. If you are a website owner and want to attract an audience, figure out what about the article has meaning for you and why. Instead of re-posting the content unchanged, write your take on the article, then link to the original so your audience can read the specifics from the original publisher.
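If you go the re-post route (steps 1 and 2a), it is easy to check that a page actually does both things. Below is a rough sketch in Python using only the standard library. The `RepostAudit` class, the `audit_repost` function, and the example URL path are hypothetical names I made up for illustration; the only real-world detail is the standard `<meta name="robots" content="noindex">` tag itself.

```python
from html.parser import HTMLParser


class RepostAudit(HTMLParser):
    """Scan a page for a robots noindex tag and a link to the original post."""

    def __init__(self, original_url: str):
        super().__init__()
        self.original_url = original_url.rstrip("/")
        self.noindex = False
        self.links_back = False

    def handle_starttag(self, tag, attrs):
        # Attributes without a value arrive as None, so normalise to "".
        attributes = {name: (value or "") for name, value in attrs}
        if tag == "meta" and attributes.get("name", "").lower() == "robots":
            directives = [d.strip().lower()
                          for d in attributes.get("content", "").split(",")]
            if "noindex" in directives:
                self.noindex = True
        elif tag == "a" and attributes.get("href", "").rstrip("/") == self.original_url:
            self.links_back = True


def audit_repost(html: str, original_url: str) -> dict:
    """Report whether the page links back and asks not to be indexed."""
    parser = RepostAudit(original_url)
    parser.feed(html)
    return {"links_back": parser.links_back, "noindex": parser.noindex}


# Example re-post page (hypothetical URL path on a placeholder domain).
page = """
<html><head>
<meta name="robots" content="noindex, follow">
</head><body>
<p>Originally published at
<a href="https://content-publisher.com/original-article">content-publisher.com</a>.</p>
</body></html>
"""

print(audit_repost(page, "https://content-publisher.com/original-article"))
```

If either value comes back `False`, the re-post is missing one of the two basics above. Note that this only checks the page’s HTML; it says nothing about whether you have the publisher’s permission.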

What should I do if the site contacts me and asks me to take their content down?

Take it down.

There are a number of types of copyrights, and you should understand them before doing anything with someone’s property – and that includes website copy as well as images.

Did you notice the copyright stamp on the bottom of the publisher’s site when you copied and pasted their content to re-post? That’s not for show. That literally means that you have no rights to their copy without their permission.

What happens if I use someone’s content without permission?

The most likely result is that you get hit with a Google duplicate content filter on that specific page, and it simply will not rank in search results. If you do it often enough, Google may see your site as “scraping” other people’s content in order to benefit from their keywords to attract links and search traffic. That could get you a site-wide penalty and possible de-indexation.

However, if you are posting other people’s content without permission, any person or publisher can file a removal request against you with Google under the Digital Millennium Copyright Act, and Google will review and remove content it agrees is in violation.

If you make a habit out of scraping other people’s content without permission, and without adding any other substantially unique content to your website, then you will likely be removed from search results.

What about “Free Articles” offered for re-publication?

Basically, that is like taking candy from strangers. So, from my mother’s perspective, that candy might have razor blades in it, or have been laced with drugs or something. Okay, okay – so her paranoia (circa 1989) aside, this “free” content, by its nature, is potentially poison to your site. If the article is actually good, and you are willing to post it to your site, then everyone else who is looking for “free articles” (that comes to about 3600 searches a month, on average, according to Google’s Keyword Planner tool) has already had the same thought and execution.

Great Scott! That’s a lot of duplicate content! (Yes, I agree completely. Glad you are seeing things my way.) So, unless you find someone who will write you your very own “free article” that they guarantee is 100% unique and will not be posted anywhere else, you are way out of luck. And, by the way, if I am a copywriter that is taking the time to research and write a high-quality piece of content that can only be placed once, I am going to charge for it. Keep in mind that, when it comes to copy, you absolutely get what you pay for.

How do I find sites that have republished my content?

When it comes to finding duplicate content, I recommend using a duplicate content checker (I prefer plagspotter.com). Go to the site, enter the URL of a specific page on your site (this is free), or check the whole site (this costs money). It will show you other pages on the internet that contain any portion of your content, including a snippet of the content that has been duplicated, a link to the page and what percentage of the page that snippet represents, as well as the total duplicate content percentage.

What should I do if someone DID Scrape my content?

First, be nice. I hear imitation is the sincerest form of flattery. Assume they don’t understand the rules, and just really liked your stuff. Email the webmaster and request that they follow the guidelines outlined in this post by either rewriting the piece to reflect their unique perspective, or setting their meta robots tag to “noindex” so the page is not part of the Google/Bing/Yahoo indices.

What if they don’t change the content or take it down?

Google has very helpfully provided a page to report this type of content problem.

What are the benefits of following Google’s Quality Guidelines?

Here’s my “In A Perfect World” Scenario

If syndicating-site.com were to only post other people’s content as a summation with their perspective or spin applied to it, they would create a site unique to the web, and appealing to a unique segment of their market that shares syndicating-site.com’s perspective (to one degree or another).

Assuming syndicating-site.com’s perspective is shared by a portion of the population, some of those people may search for topics covered in the site’s take on content-publisher.com’s content. In so doing, the site could attract a decent number of visitors from search, as well as backlinks of its own, resulting in referral traffic, etc.

What this does for the Content Syndicate

Assuming they are attracting those visitors based on content that is applicable to their intended audience:

1. If syndicating-site.com only provides unique value, they will attract more links to their own content and have more authority;

2. They avoid a duplicate content penalty from search engines

3. The links they are getting provide them with referral traffic and social traffic, as well as credibility and brand awareness

4. Syndicating-site.com can become an authority in their own right, over time

What this does for the Content Publisher

1. Having a high authority site (syndicating-site.com) promote content-publisher.com increases credibility and brand awareness for the Content Publisher, as well as page and domain authority

2. This will increase the amount of link juice passed through the link on syndicating-site.com to the original article on content-publisher.com

3. Assuming syndicating-site.com and content-publisher.com share a similar audience, this should result in referral traffic from syndicating-site.com to content-publisher.com

4. All of this together increases the Content Publisher’s search and referral traffic, and will likely increase social follows and, indirectly, conversions.

So, having these content syndication sites follow Google’s Quality Guidelines will help both the syndication site and the content publisher in search results.